tldr: Most "best QA automation tools" lists rank by feature checklist. We rank by buyer. Solo founder, dev team without QA, small QA team of 1–3, or ready to outsource entirely. Twelve tools across four tiers, each judged on where it falls apart, not just where it shines. The pattern from 200+ Forward Deployed Engineer onboardings: tools that win the Week 1 demo lose Week 6 production. The future of QA automation is outcome-based, not tool-based. That's what we built Bug0 to deliver.

We're going to break a rule

Most lists like this rank tools 1 to 12. We're not doing that.

Ranking QA automation tools globally is a fiction. The "best" tool for a solo technical founder shipping to 50 users is not the "best" tool for a 40-person engineering org with a regulated checkout flow. Anyone who tells you otherwise is selling something. Usually their own tool, slotted at #1.

We run a forward-deployed QA team at Bug0. We've onboarded 200+ engineering teams. Almost every one came to us off another QA automation tool. The pattern is so consistent we built this list around it: tools fail at predictable points, and those points are tied to who is using the tool, not what the tool can do.

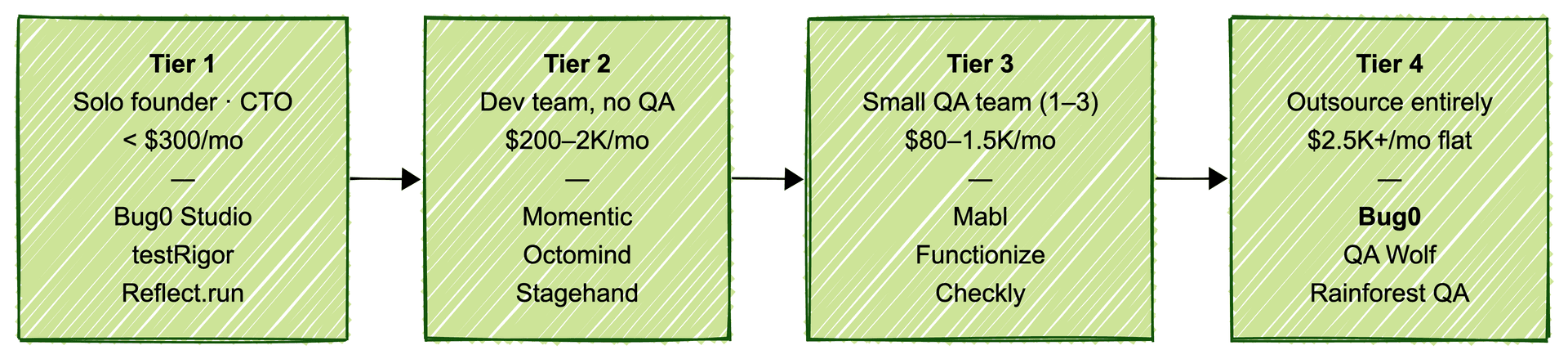

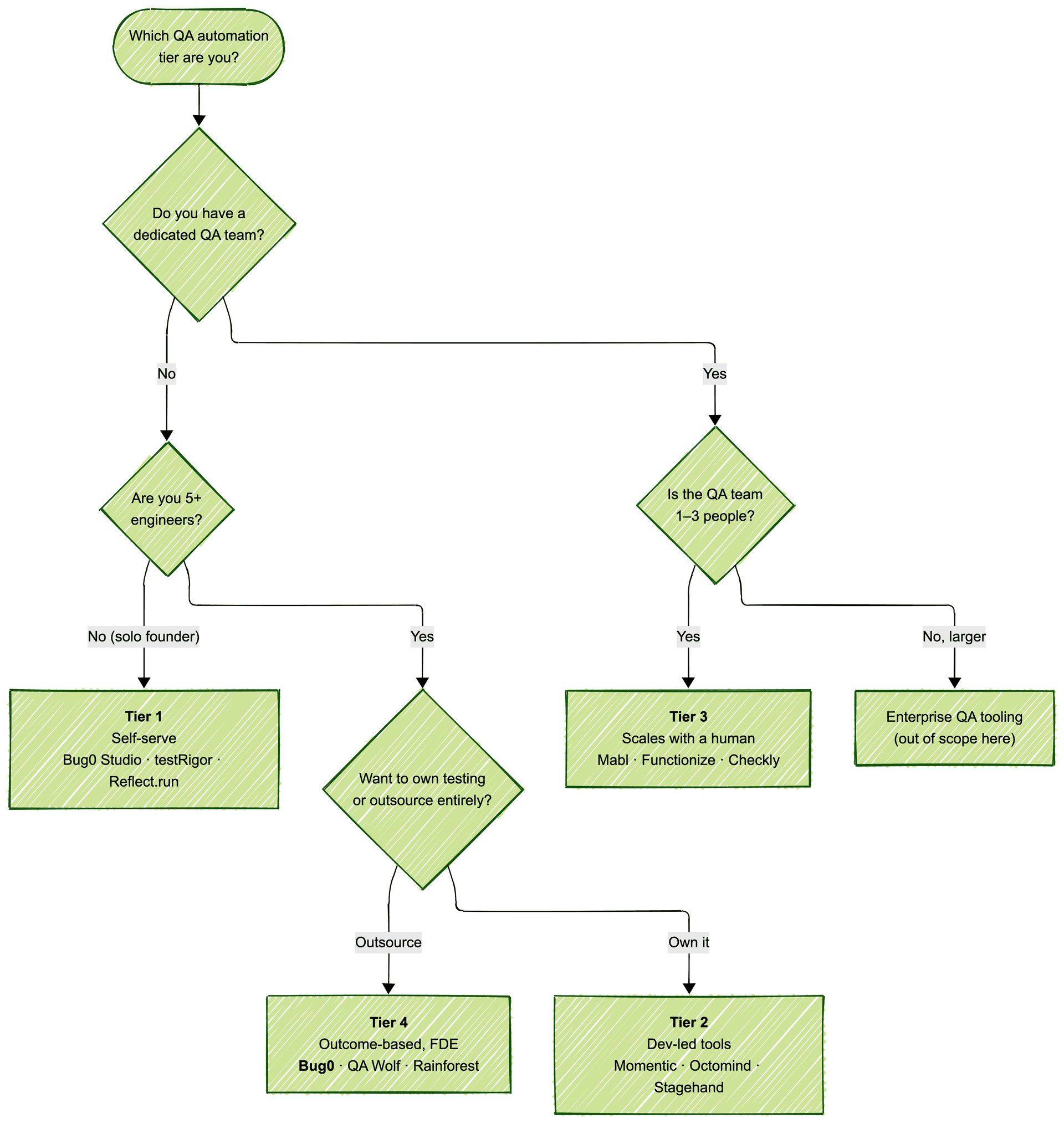

So this list is ranked by buyer. Four tiers, three tools each:

| Tier | Who you are | What you actually need |
|---|---|---|
| Tier 1 | Solo founder or technical CTO | Cheap, fast, "good enough" coverage you can run yourself |
| Tier 2 | Dev team without dedicated QA | Self-serve test automation tools that don't demand a QA hire |
| Tier 3 | Small QA team (1–3 people) | A platform that scales with a human, not against them |
| Tier 4 | Engineering org that wants to stop testing entirely | Done-for-you outcomes, flat monthly billing, zero maintenance |

If you're in the wrong tier, every tool in this list is wrong for you. That's the entire point.

We also broke a second rule. Most 2026 lists name the same eight tools. Ours adds three you won't find on those lists: Reflect.run, Stagehand, and Checkly. These tools shipped faster than most listicles updated. We see them in the field; the SERPs don't yet.

What QA automation actually means in 2026

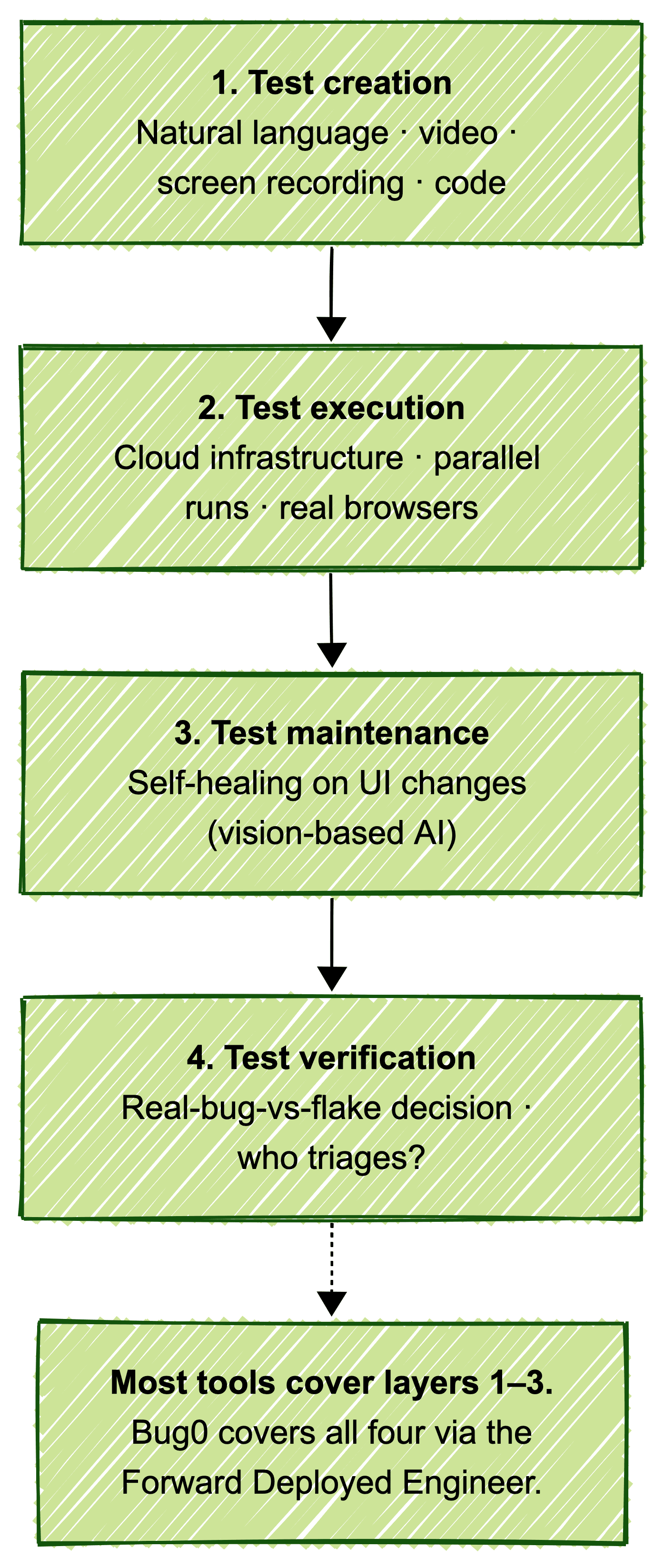

QA automation in 2026 is no longer "Selenium scripts running in Jenkins." It's a four-layer QA automation framework:

- Test creation: natural language, video, screen recording, or code
- Test execution: cloud infrastructure, parallel runs, real browsers
- Test maintenance: self-healing when the UI changes, ideally without a human touching anything
- Test verification: someone (or something) deciding whether a failure is a real bug or a false positive

Every tool below covers some subset of these four layers. The mistake teams make is buying a tool that covers two layers and then discovering, three months in, that the missing two layers are exactly the ones eating their engineering time.

For a deeper walkthrough of how this stack works in production, see our guide to AI for QA testing and the breakdown of why AI testing tools alone won't fix QA.

Tier 1: Solo founders and technical CTOs

You wrote the product. You'd rather write more product. You want QA automation tools that get out of your way, cost under $300/month, and don't require a learning curve longer than a Saturday afternoon.

The trap in Tier 1 is buying enterprise-grade tooling because it looks legitimate. It will sit unused.

Bug0 Studio

Best for: Founders and full-stack devs who want to describe tests in plain English and drive their own QA.

Where it falls apart: If you have an existing 500-test Playwright suite you want to keep, Studio doesn't import legacy code; it replaces the workflow. Once your app crosses ~50 critical flows, most teams move to the Bug0 Forward Deployed Engineer model (see Tier 4).

Public claim: 500+ tests in under 5 minutes; 0% flake rate via vision-based self-healing.

Pricing: From $250/month, pay-as-you-go for test minutes.

Verdict: The same AI engine that powers Bug0's Forward Deployed Engineers, in self-serve form. Use it if you genuinely want to drive testing yourself.

"Bug0 is the closest thing to plug-and-play QA testing at scale. Since using it at Dub, it's helped us catch multiple bugs before they made it to prod."

Steven Tey, Founder, Dub

testRigor

Best for: Solo technical builders who want to write tests in pure plain English without the Playwright abstraction underneath.

Where it falls apart: Test runs can be slow, debugging is opaque, and you're locked into testRigor's runtime. We see customers spend ~3 hours per week debugging failures the tool can't explain.

Public claim: "100x faster test automation, 99.5% maintenance reduction" (vendor-stated).

Pricing: Custom. Typically starts around $300/month for the individual tier.

Verdict: Strong if your only criterion is "no code." Weaker if you ever want to inspect or extend the underlying logic. See our testRigor alternatives breakdown.

Reflect.run

Best for: Founders who want a recorder that actually heals. Ghost Inspector evolved.

Where it falls apart: Recorder-first model still struggles with multi-tab flows and SSO redirects. Solid for single-tab user journeys, weaker the moment your app opens a new window.

Public claim: No-code, AI-powered self-healing, parallel cloud runs.

Pricing: From ~$153/month team plan.

Verdict: The fresher recorder. Pick this over older recorder tools in 2026.

Why we list it when nobody else does: We see it on customer onboardings, but it's missing from every other "best QA automation tools" listicle. That's a listicle-world oversight, not a tool one. Reflect's team has shipped more recorder updates in the last 18 months than legacy recorder vendors combined.

Tier 2: Dev teams without dedicated QA

You're 5–25 engineers. You don't want to hire QA. You want a tool a senior dev can own in two hours per week. The trap in Tier 2 is buying the cheapest thing on G2. Most of those tools assume a QA admin who doesn't exist on your team.

Momentic

Best for: PR-gate testing in dev-led shops shipping multiple times a day.

Where it falls apart: Their natural-language layer drifts as test count grows past ~100. Performance and reliability both degrade on nested modal flows.

Public claim: 70% less automation time at Notion, 8x release cadence at Retool, 99% fewer false positives at Webflow.

Pricing: Custom, typically ~$500–2,000/month.

Verdict: Strongest dev-first AI tool in this tier. Hits a complexity ceiling. See Momentic alternatives.

"Bug0 integrates seamlessly into our workflow and delivers instant value. The automated test coverage gave us confidence to ship faster while maintaining quality standards."

Tomer Barnea, Co-Founder, Novu


Octomind

Best for: Teams that want zero-config AI test generation on greenfield apps.

Where it falls apart: Multi-step authentication and multi-tenant flows expose the limits of its discovery engine. Strong on the happy path, weaker on conditional paths.

Public claim: 99% test success rate, 78% fewer false positives, 50% less debugging time, 5-minute setup.

Pricing: From ~$199/month.

Verdict: Cleanest 5-minute onboarding in the category. Plateaus the moment your app has real complexity. See Octomind alternatives.

Stagehand by Browserbase

Best for: Dev teams that want agentic browser automation as a TypeScript SDK they fully control.

Where it falls apart: It's a framework, not a managed service. There's no UI, no run history dashboard, no built-in CI integration. You wire all of that yourself.

Public claim: Deterministic agent actions on top of Playwright; act / observe / extract primitives.

Pricing: Free open source. Browserbase infrastructure runs roughly $0–500/month for typical usage.

Verdict: The right pick if your dev team treats QA as code, not a product. Pair it with your own CI and you have a controllable agentic-test layer.

Why we list it when nobody else does: Every other 2026 listicle missed the agentic SDK era. Stagehand is the most production-ready of the new wave. We compared it head-to-head against the alternatives in Expect vs Browser Use vs Stagehand vs Passmark. Required reading if you're picking in this category.

Tier 3: Small QA teams (1–3 people)

You have a QA lead. Maybe two. Their job is to scale coverage without becoming a 10-person team. The trap in Tier 3 is buying enterprise QA platforms or generic QA management software that turns your QA lead into a tool admin. The right QA software tools at this tier scale with a human, not against one.

Mabl

Best for: QA teams who want a mature, polished AI-augmented platform with enterprise-grade reporting.

Where it falls apart: Heavy onboarding curve. Your QA lead becomes a Mabl admin: most of week one is workspace setup, not test creation. UI-change healing works; logic-driven UX shifts still need manual intervention.

Public claim: AI-powered self-healing, journey-based test design, integrated CI/CD.

Pricing: Custom, typically starts around $1,500/month.

Verdict: The safe enterprise-grade choice. Slower to value than newer AI-native tools in this tier.

Functionize

Best for: Small QA teams inside larger orgs needing risk-based coverage and visual regression at the same time.

Where it falls apart: 1–3-person teams drown in feature surface area. Built for QA orgs of 10+; the surface complexity is a tax on smaller teams.

Public claim: 99.97% element recognition, 90% faster test creation, 40 hr → 4 hr testing at GE Healthcare.

Pricing: Enterprise / custom.

Verdict: Powerful, over-built for this tier. Consider only if you're growing fast into Tier 4. See Functionize alternatives.

Checkly

Best for: QA + SRE hybrid teams. End-to-end testing plus synthetic monitoring in one platform.

Where it falls apart: Test creation is still code-first (Playwright Test under the hood). Non-coders need help. If your QA team is non-technical, this is the wrong pick.

Public claim: Monitoring-as-code, runs Playwright Test natively, 25+ global regions.

Pricing: From $80/month team plan.

Verdict: The most underrated entry on this list. If your QA and on-call worlds overlap, which they do at most growth-stage startups, this is the answer.

Why we list it when nobody else does: Every QA-tools listicle ignores Checkly because it sits between QA and SRE categories. That makes it perfect for the small-QA-team tier. Your one or two QA people already wear both hats whether they admit it or not.

Tier 4: Teams that should outsource entirely

This is the strongest opinion in the piece, so we'll state it plainly: if you're shipping weekly, you don't have a QA team, and you don't want one, every tool above is a maintenance burden waiting to happen.

The category that fits is QA automation services, also called outsourced QA services, QA as a service, or managed QA services depending on who's selling it. The shape is the same: an outside team owns testing as an outcome, and you stop owning the QA stack. The best QA automation companies in this category deliver on a flat monthly subscription instead of an hourly consulting bill, which is why software QA outsourcing is shifting from staffing-shop economics to platform economics in 2026.

Stop buying tools. Buy outcomes.

Bug0

Best for: 20–500-engineer B2B SaaS without QA headcount, who want outcomes instead of another tool to manage.

What it is: A dedicated Forward Deployed Engineer plus the Bug0 AI platform, all-inclusive at one flat rate. Your FDE works in your Slack, in your timezone, in your sprint. They plan tests, run them, verify every result, file real bugs with repro steps, and gate releases. You stop touching the QA stack.

Public claim: 100% critical-flow coverage in 1–2 weeks. 100% full-application coverage in 4 weeks. 0% flake rate via vision-based self-healing.

Pricing: $2,500/month flat. 90-day pilots available. No man-hour billing, no AI-credit overages, no infrastructure surcharges.

Verdict: The default pick for this tier. An outcome-based service, not a tool subscription.

"Bug0 gives us the speed of AI-native automation with the accuracy and self-healing of human QA. Their hybrid approach is a game changer."

Jacob Lauritzen, Head of Engineering, Legora

QA Wolf

Best for: Mid-market and enterprise teams with bigger budgets and longer rollout timelines.

Where it falls apart: Custom contracts that typically land at $5,000+/month. Coverage timeline is 4 months to 80%, versus Bug0's 4 weeks to 100%. Best fit if you're already used to enterprise-procurement cycles.

Public claim: 80% coverage in 4 months, $10K/month saved per engineer at customers like Salesloft, Mailchimp, and Drata.

Pricing: Custom, typically $5,000+/month.

Verdict: Strong managed offering. Wrong economics for the startup/growth tier. See QA Wolf alternatives.

Rainforest QA

Best for: Teams that specifically want crowdtested human verification on top of automation.

Where it falls apart: Crowdtesting roots show. They're slower than automation-first managed services. Market position has been declining for two years.

Public claim: ~250 customers, $42.3M raised.

Pricing: Custom.

Verdict: Yesterday's leader in a category that has moved on. See Rainforest QA alternatives.

Honourable mentions

A few tools that didn't earn a tier slot but show up enough in buyer searches that they deserve a paragraph each.

- Tricentis Tosca: model-based, 160+ technologies supported, $50K+ enterprise deals. Wrong fit for anyone reading this list.
- Testsigma: 25M+ tests executed, G2 4.4/5. Cloud codeless platform; competitive at mid-market price points.
- Virtuoso QA: 10x faster execution, 95% self-healing, Forrester Strong Performer 2024. Solid mid-market pick with weaker brand recognition than the leaders.
- Passmark. We built this. Open-source AI regression testing on top of Playwright. We didn't put it in a tier because it's not a SaaS; it's the engine that powers Bug0 Studio. We open-sourced it because we wanted teams to inspect every part of the system that runs their tests. See why we open sourced Passmark and the GitHub repo.

We also deliberately excluded two categories most listicles confuse with QA automation:

- Selenium, Playwright, and Cypress are frameworks, not tools. They're the substrate other tools build on.
- BrowserStack and LambdaTest are pure infrastructure. They run tests; they don't create or maintain them. See BrowserStack alternatives and LambdaTest alternatives if that's what you actually need.

How each tool covers the four-layer framework

Before the vendor-claim breakdown, here's the matrix every other listicle skips: which tools actually cover all four layers of the QA automation framework, and which only cover one or two. This is the table you'd build for an internal RFP.

| Tool | Create | Execute | Maintain | Verify |
|---|---|---|---|---|
| Bug0 (FDE) | ✓ | ✓ | ✓ | ✓ |
| Bug0 Studio | ✓ | ✓ | ✓ | ◐ |
| testRigor | ✓ | ✓ | ◐ | ✗ |
| Reflect.run | ✓ | ✓ | ◐ | ✗ |
| Momentic | ✓ | ✓ | ✓ | ✗ |
| Octomind | ✓ | ✓ | ◐ | ✗ |
| Stagehand | ◐ | ✗ | ✗ | ✗ |
| Mabl | ✓ | ✓ | ◐ | ✗ |
| Functionize | ✓ | ✓ | ✓ | ✗ |
| Checkly | ◐ | ✓ | ◐ | ✗ |
| QA Wolf | ✓ | ✓ | ◐ | ✓ |
| Rainforest QA | ◐ | ✓ | ✗ | ✓ |

Legend: ✓ full coverage · ◐ partial · ✗ none.

Tools that show partial or no coverage on layer 4 (verification) are the ones where teams hit the Week 6 wall. Flaky failures, nobody to triage them.
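If you want to run this matrix against your own shortlist, here is a minimal Python sketch. The tool rows are transcribed from the table above; the numeric scale (1.0 full, 0.5 partial, 0.0 none) and the helper names `gaps` and `missing_layer` are our own illustrative choices, not part of any vendor's API.

```python
# Coverage matrix transcribed from the table above.
# Assumption: 1.0 = full, 0.5 = partial, 0.0 = none. The scale is illustrative.
FULL, PARTIAL, NONE = 1.0, 0.5, 0.0
LAYERS = ("create", "execute", "maintain", "verify")

MATRIX = {
    "Bug0 (FDE)":    (FULL, FULL, FULL, FULL),
    "Bug0 Studio":   (FULL, FULL, FULL, PARTIAL),
    "testRigor":     (FULL, FULL, PARTIAL, NONE),
    "Reflect.run":   (FULL, FULL, PARTIAL, NONE),
    "Momentic":      (FULL, FULL, FULL, NONE),
    "Octomind":      (FULL, FULL, PARTIAL, NONE),
    "Stagehand":     (PARTIAL, NONE, NONE, NONE),
    "Mabl":          (FULL, FULL, PARTIAL, NONE),
    "Functionize":   (FULL, FULL, FULL, NONE),
    "Checkly":       (PARTIAL, FULL, PARTIAL, NONE),
    "QA Wolf":       (FULL, FULL, PARTIAL, FULL),
    "Rainforest QA": (PARTIAL, FULL, NONE, FULL),
}


def gaps(tool):
    """Return the layers a tool does not fully cover."""
    return [layer for layer, score in zip(LAYERS, MATRIX[tool]) if score < FULL]


def missing_layer(layer):
    """Return every tool with partial or no coverage on the given layer."""
    idx = LAYERS.index(layer)
    return [tool for tool, scores in MATRIX.items() if scores[idx] < FULL]


print(gaps("Momentic"))               # ['verify']
print(len(missing_layer("verify")))   # 9
```

Swap in your own candidate tools and scores; the useful output is usually `gaps(...)` for the one tool you are about to sign a contract with.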

Vendor claim vs Forward Deployed Engineer reality

Every vendor publishes a hero metric. Some are real, some are technically true but misleading, and some are marketing gloss. Here's what we see in the field:

| Tool | Vendor's headline claim | What we see in customer onboardings |
|---|---|---|
| testRigor | "99.5% maintenance reduction" | Customers still spend ~3 hrs/week debugging opaque failures |
| Reflect.run | "AI heal, parallel runs" | Heals well on selector drift; struggles on full-flow restructure |
| Momentic | "70% less automation time" | True for first ~80 tests; flatlines after |
| Octomind | "99% test success rate" | True on greenfield; complex auth flows drag it down |
| Stagehand | "Deterministic agentic actions" | Verified, but you're writing the harness yourself |
| Mabl | "AI-powered self-healing" | Heals UI changes; struggles with logic-driven UX shifts |
| Functionize | "99.97% element recognition" | Element recognition ≠ test reliability. Different metric. |
| Checkly | "Monitoring-as-code" | Confirmed, but only true if your team can write Playwright |
| QA Wolf | "80% coverage in 4 months" | Confirmed across customers we onboarded after they churned |
| Bug0 | "100% in 4 weeks, 0% flake" | Verified across 200+ Forward Deployed Engineer engagements |

This table is what we wish someone had handed us when we were buyers.

Why most QA automation tools fail at Week 6

Almost every tool in this category demos beautifully. The failure modes show up later. Five patterns we see across customer onboardings:

- The Week-1 Demo Trap. Every tool wins Week 1. That's what the sales engineer practiced. Real-world UI churn, dependency shifts, and feature-flag toggles break most of them by Week 6.
- Self-healing isn't real healing. Most "self-healing" handles selector drift (a button got a new class name). It doesn't handle UX restructures (the button moved to a different page, or the flow added a step). The first kind of change is easy. The second is what actually breaks production tests.
- Verification debt. A flaky test is worse than no test. It generates false confidence and trains your team to ignore failures. Tools without strong verification eventually create more drag than they remove.
- The hidden QA hire. Tools that "don't require QA expertise" usually still require a tool admin: someone to maintain the workspace, triage failures, and update integrations. That's a QA hire wearing a different hat.
- Pricing surprises at scale. Pay-per-test or pay-per-minute models punish you the moment your coverage works. The bill grows with your success.

The pattern hits hardest in the first two weeks of a Forward Deployed Engineer onboarding. A growth-stage team arrives off a popular AI-native testing tool. Suite of a few hundred tests. Double-digit flake rate. Bringing flake to zero usually takes a couple of weeks, but most of that time isn't healing. It's rebuilding tests from scratch, because the existing ones were healing on selectors that no longer matched user intent. That gap between "selector heal" and "intent heal" is what kills tools at Week 6.
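To put a number on "double-digit flake rate" for your own suite, here is a rough Python sketch. It assumes one common working definition of flaky (a test that both passed and failed on the same commit) and a hypothetical run-history format of `(test_name, commit_sha, passed)` tuples; your CI export will look different, and this is not how any particular tool measures flake.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    runs: iterable of (test_name, commit_sha, passed) tuples (hypothetical format).
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(bool(passed))
    return {test for (test, _), seen in outcomes.items() if seen == {True, False}}


def flake_rate(runs):
    """Share of distinct tests that were flaky at least once."""
    runs = list(runs)
    all_tests = {test for test, _, _ in runs}
    if not all_tests:
        return 0.0
    return len(flaky_tests(runs)) / len(all_tests)


history = [
    ("checkout", "abc123", True),
    ("checkout", "abc123", False),   # same commit, different result: flaky
    ("login", "abc123", True),
    ("login", "abc123", True),
    ("signup", "abc123", False),
    ("signup", "abc123", False),     # fails consistently: a real failure, not a flake
]

print(flaky_tests(history))  # {'checkout'}
```

Note what the sketch cannot do: it separates inconsistent tests from consistent ones, but it cannot tell you whether the consistent `signup` failure is a real bug or a test that healed onto the wrong element. That judgment is the verification layer.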

"With Bug0, regression testing became effortless. They update tests as fast as we ship, so we can release with confidence every time."

Mohak Singh, Director of Engineering, Bridgetown Research

The honest read: most QA automation tools are sold to a buyer that can absorb the failure modes. Tier 1 and Tier 2 buyers (the ones reading this list) usually can't.

The future: outcome-based QA, run by Forward Deployed Engineers

The shift we see in 2026: more teams are buying outcomes instead of tools. The reasoning is simple. A fully-loaded QA engineer costs $130–150K/year. The tool license adds $5–15K. The cloud infrastructure adds another $3–10K. And your engineering team still spends 30–50% of its time on bug rework.

The model that's winning is the Forward Deployed Engineer. An engineer assigned to your team, working in your Slack and your sprint, owning the QA outcome end-to-end. That's what Bug0 delivers, and it collapses the whole stack into one flat subscription.

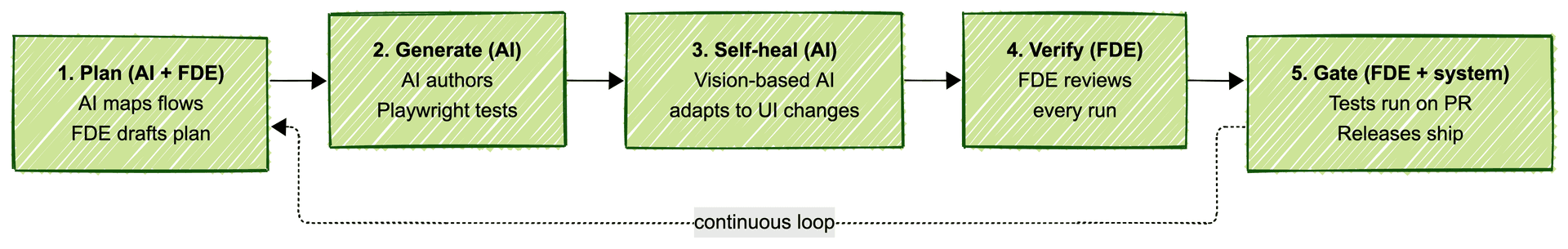

The hybrid loop looks like this:

- Plan: AI maps your critical user flows; the Forward Deployed Engineer drafts the test plan with you
- Generate: agentic tests authored via the Bug0 AI engine
- Self-heal: vision-based AI adapts to UI changes deterministically; flakes caught early
- Verify: human eyes on every run; the Forward Deployed Engineer files real bugs with repro steps
- Gate: tests run on every PR and deploy; releases ship with confidence

This is also why we open-sourced Passmark, the AI testing engine that runs underneath Bug0. You can inspect every part of the system that runs your tests. Most managed-QA vendors won't show you what's inside the box. We shipped the source.

The cost comparison most teams haven't run for themselves:

| What you'd need without Bug0 | Annual cost |
|---|---|
| 1 QA engineer (fully loaded) | $130–150K |
| Test automation tool license | $5–15K |
| Cloud test infrastructure | $3–10K |
| Maintenance & flake debugging (30–50% of dev time) | Opportunity cost |
| Total | $150K+/yr |
| Bug0 (Forward Deployed Engineer, all-inclusive) | $30K/yr |
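To rerun the comparison with your own numbers, the arithmetic is short enough to sketch in Python. The ranges below are the three priced rows from the table and the $2,500/month flat rate; the variable names are ours, and the opportunity-cost row is left out because the table does not put a dollar figure on it.

```python
# Annual figures in USD as (low, high) ranges, copied from the table above.
# The unpriced "maintenance & flake debugging" row is deliberately excluded.
DIY_COSTS = {
    "qa_engineer": (130_000, 150_000),
    "tool_license": (5_000, 15_000),
    "cloud_infra": (3_000, 10_000),
}
BUG0_MONTHLY = 2_500  # flat rate quoted in this article


def diy_total(costs):
    """Sum the low ends and the high ends of each cost range."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


low, high = diy_total(DIY_COSTS)
bug0_annual = BUG0_MONTHLY * 12

print(f"DIY: ${low:,}-${high:,}")     # DIY: $138,000-$175,000
print(f"Bug0: ${bug0_annual:,}")      # Bug0: $30,000
```

Replace the ranges with your actual salary bands and license quotes. The dev time spent on flake debugging sits on top of whatever total you get.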

If this matches your situation, see how it works or book a demo. Larger orgs with compliance and SLA requirements get dedicated Forward Deployed Engineers, custom SLAs, and SOC 2 coverage.

Prefer to drive it yourself? Bug0 Studio is the same AI engine in self-serve form, from $250/month.

FAQs

What is QA automation?

QA automation is the practice of using software, AI, and human verification to test web and mobile applications without manual click-through. In 2026 it spans four layers: test creation, execution, maintenance, and verification. Modern QA automation tools handle the first three layers. QA automation services like Bug0 cover all four with a Forward Deployed Engineer in the loop.

What are some QA automation tools?

The most-searched QA automation tools in 2026 include AI-native platforms (Bug0 Studio, Momentic, Octomind, Mabl, testRigor, Functionize), agentic SDKs (Stagehand, Browser Use), recorders (Reflect.run), monitoring-led platforms (Checkly), and managed services (Bug0, QA Wolf). The right pick depends on your team type, not the feature list.

What are the top 5 automation tools?

For most growth-stage teams in 2026, the top five are Bug0, Momentic, Octomind, Mabl, and Checkly. Each fits a different buyer tier. See the breakdown above for which one matches your team.

What is the most demanding automation testing tool?

Tricentis Tosca and Functionize have the steepest learning curves and the largest surface areas. Both are built for enterprise QA orgs. Smaller teams drown in the feature complexity. Lighter-weight options like Bug0 Studio, Octomind, or Reflect.run get you running in minutes instead of weeks.

What are the 4 pillars of automation?

The four-layer QA automation framework is creation, execution, maintenance, and verification. Most tools cover two or three layers strongly. The mistake teams make is buying a tool that covers two layers and discovering three months in that the missing layers are the ones eating their engineering time.

What's the difference between QA automation and test automation?

Test automation is the narrow act of running automated tests. QA automation is the broader practice. It includes test creation, execution, maintenance, verification, and the human and AI workflows around all four. Tools that only run tests are "test runners." Tools that handle the full cycle are QA automation tools.

How does Bug0 fit in?

Bug0 is the Forward Deployed Engineer path for teams that want outcomes instead of tooling: a dedicated engineer plus the Bug0 AI platform, all-inclusive, at $2,500/month flat. Coverage reaches 100% of critical flows in 1–2 weeks and 100% of the full app in 4 weeks. The same AI engine in self-serve form is Bug0 Studio, from $250/month.

Conclusion

Your team type picks the tool, not the feature list.

If you're a solo founder or technical CTO, pick something cheap and self-serve: Bug0 Studio, testRigor, or Reflect.run. If you're a dev team without QA, pick something that doesn't demand a QA hire: Momentic, Octomind, or Stagehand. If you're a small QA team, pick something that scales with a human: Mabl, Functionize, or Checkly. And if you're shipping weekly without a QA team and you don't want one, stop buying tools. Buy outcomes: Bug0, QA Wolf, or Rainforest QA.

By 2027, fewer teams will buy QA automation tools. More will buy outcomes. The platforms that survive will be the ones that combine AI agents with Forward Deployed Engineers verifying every run, all in one flat subscription.

See Bug0, the outcome-based Forward Deployed Engineer model, from $2,500/month flat.

For more patterns from our Forward Deployed Engineers, see the latest insights on AI QA.