TL;DR: Most engineering teams have never calculated the cost per bug caught by their regression test suite. When you do the math, the curve is brutal. Maintenance costs scale linearly with test count. Bugs caught plateau after your first few hundred tests. The crossover point, where you're spending more to maintain tests than the regressions are worth, hits sooner than you think.

The question nobody asks

How much does each regression test cost you?

Not the tool license. The fully loaded cost. Engineer time to write it. Maintain it when the UI changes. Debug it when it flakes. Re-run the pipeline when it fails. Triage whether the failure is real or noise. Multiply by 52 weeks.

Now divide by the number of real regressions your suite caught over the same 12 months.

Most teams can't answer this. They know how many tests they have (it's a big number, and they're proud of it). They know their coverage percentage (it's in a dashboard somewhere). They don't know the cost per bug caught.

The ones who calculate it wish they hadn't asked.

I've seen teams spending $84K/year maintaining regression suites that catch 35-50 real bugs. That's $1,700-$2,400 per bug. For some of those bugs, the fix was a one-line CSS change.

This article is about the math. Run it on your own team. Then decide whether your regression suite is an asset or a liability.

What is regression testing

Regression testing is re-running existing tests after code changes to verify that new code didn't break existing functionality. You ship a feature. You re-run the suite. If something that worked before now fails, that's a regression.

The concept dates back to the 1960s. The practice became standard with the rise of automated testing frameworks in the 2000s. Today, regression testing is one of the most important practices in software engineering and one of the most expensive to maintain at scale.

Types of regression testing

- Corrective regression testing. Re-running existing tests without modifications. The simplest form. Your code changed but your tests didn't.
- Progressive regression testing. Updating tests to match new requirements. You changed the checkout flow, so the checkout tests need to change too.
- Selective regression testing. Running only the tests affected by the code change. Faster than a full run. Riskier if your dependency mapping is wrong.
- Complete regression testing. Running every test in the suite. The safest approach. Also the slowest. Most teams only do this before major releases.
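Selective regression testing is only as good as the dependency map behind it. Here is a minimal sketch of the idea, assuming a hand-maintained map from tests to the source files they exercise. The test names, file paths, and both helper functions are hypothetical, not part of any particular tool.

```python
from typing import Dict, List, Set

# Hypothetical dependency map: test name -> source files it exercises.
TEST_DEPENDENCIES: Dict[str, Set[str]] = {
    "test_login": {"auth/session.py", "ui/login_form.py"},
    "test_checkout": {"cart/totals.py", "payments/charge.py"},
    "test_onboarding": {"auth/session.py", "ui/welcome.py"},
}


def select_tests(changed_files: Set[str], deps: Dict[str, Set[str]]) -> List[str]:
    """Return the tests whose mapped files overlap the change set."""
    return sorted(name for name, files in deps.items() if files & changed_files)


def unmapped_files(changed_files: Set[str], deps: Dict[str, Set[str]]) -> Set[str]:
    """Changed files that no test claims: the blind spot of a selective run."""
    known = set().union(*deps.values())
    return changed_files - known
```

If `unmapped_files` returns anything, the map can't vouch for the change, and falling back to a complete run is the safer call.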

What regression testing means in 2026

The regression testing definition hasn't changed. The context has. Teams now ship code 3x faster using AI coding tools. AI-generated PRs contain 1.7x more issues than human-written ones. More code, more bugs, more regressions.

Your regression test suite was sized for human-speed development. It wasn't built for the volume and velocity of AI-assisted codebases.

The regression testing ROI curve

This is the math nobody shows you.

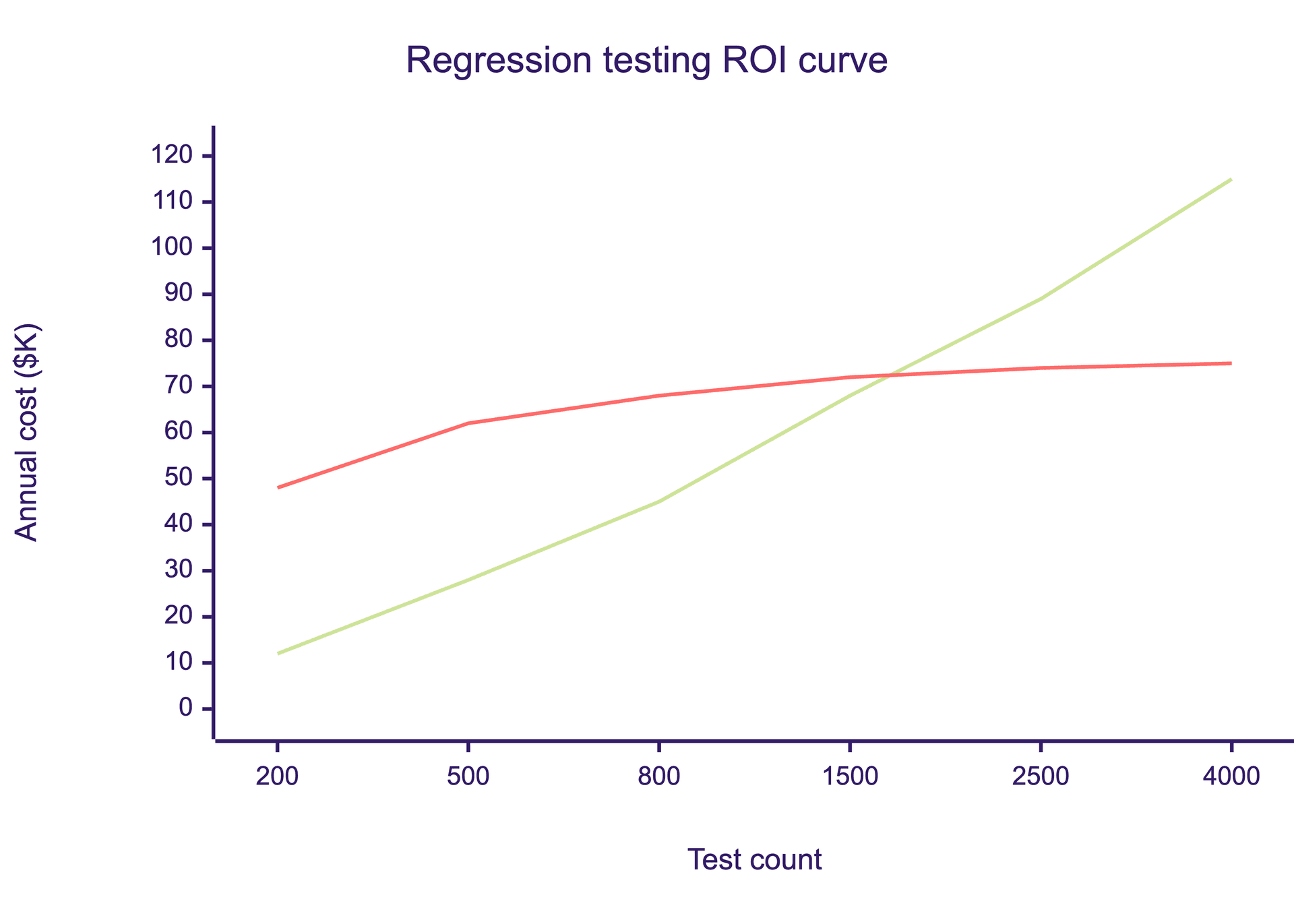

Your regression suite has two curves running in opposite directions.

Curve A: Maintenance cost. Scales linearly with test count. Every regression test you add costs roughly the same to maintain per quarter. Selectors break. Assertions drift. Flaky tests need debugging. CI pipelines need compute. One more test means one more thing to maintain, forever.

Curve B: Bugs caught. Follows a logarithmic curve. Your first 200 tests cover login, checkout, onboarding, and your core flows. They catch roughly 80% of the regressions that would hit production. The next 800 tests add secondary flows and edge cases. Maybe 15% more regressions caught. The next 2,000 tests cover increasingly obscure paths. Maybe 5% more.

The crossover point is where the cost of maintaining one more test exceeds the value of the extra regressions that test catches. After that, every test you add costs more to maintain than the regressions it catches are worth.

For most teams shipping AI-generated code in 2026, that crossover hits around 500-800 tests. Everything past the crossover is insurance you're overpaying for.
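You can put rough numbers on the crossover. The sketch below takes the three tiers described above and asks how much one caught regression must be worth for a test in that tier to pay for itself. Two inputs are assumptions for illustration: 50 catchable regressions per year, and $35.60 per test per year ($89,000 spread over 2,500 tests, borrowed from the 10-engineer example later in this article). The function name is hypothetical.

```python
from typing import List, Tuple

# Tiers from the text: (tests in tier, share of regressions caught by that tier).
TIERS: List[Tuple[int, float]] = [(200, 0.80), (800, 0.15), (2000, 0.05)]


def break_even_values(
    tiers: List[Tuple[int, float]],
    regressions_per_year: float,
    cost_per_test_per_year: float,
) -> List[float]:
    """Per tier: the value one regression must have for a test to pay for itself."""
    results = []
    for test_count, share in tiers:
        # Average regressions caught per test per year inside this tier.
        caught_per_test = share * regressions_per_year / test_count
        results.append(cost_per_test_per_year / caught_per_test)
    return results


# Assumed inputs, not measured data: 50 regressions/year, $35.60 per test per year.
values = break_even_values(TIERS, regressions_per_year=50, cost_per_test_per_year=35.60)
for (test_count, _), value in zip(TIERS, values):
    print(f"{test_count:>5} tests: break-even at ${value:,.0f} per regression")
```

Under those assumptions the break-even value climbs from about $178 per regression in the first tier to roughly $3,800 in the second and $28,500 in the third. Swap in your own counts and costs; the shape matters more than the exact figures.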

Why teams don't see the crossover

Three reasons.

First, test count feels like progress. "We have 3,000 regression tests" sounds better in a board deck than "we have 300 regression tests." Nobody gets promoted for deleting tests.

Second, coverage percentage is misleading. 85% line coverage means nothing if the covered lines aren't the ones that break. You can have 95% coverage and miss the one payment flow that costs you $200K when it fails.

Third, nobody tracks bugs caught per test. Your CI dashboard shows pass/fail. It doesn't show "tests that caught a real regression this quarter vs. tests that did nothing but consume compute."
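Tracking this doesn't need a new dashboard. Assuming you record a verdict each time a failure is triaged, a few lines can split the suite into tests that earned their keep and tests that didn't. The log format and the `signal_report` function are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# Hypothetical triage log entry: (test name, verdict).
# Verdict is "regression" for a real bug, "noise" for a flake or false alarm.
Failure = Tuple[str, str]


def signal_report(all_tests: Iterable[str], failures: Iterable[Failure]) -> Dict[str, List[str]]:
    """Split tests into those that caught a real regression and those that did not."""
    real = Counter(name for name, verdict in failures if verdict == "regression")
    tests = sorted(set(all_tests))
    return {
        "caught_regression": [t for t in tests if real[t] > 0],
        "no_signal": [t for t in tests if real[t] == 0],
    }
```

A test in `no_signal` for one quarter isn't automatically dead weight. One that sits there for a year while generating noise is a pruning candidate.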

The six-month tax

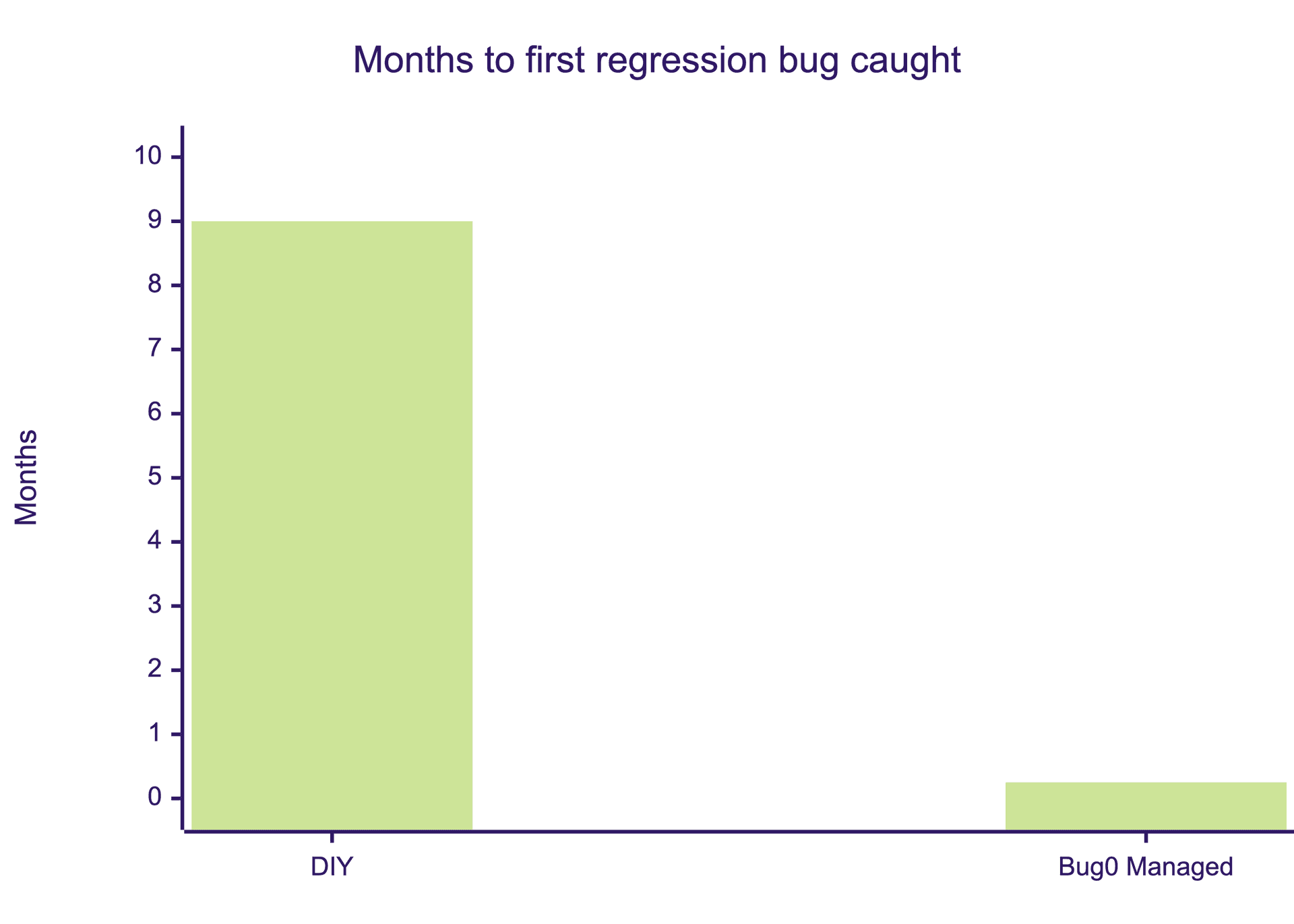

Before your regression suite catches its first bug, you pay a startup cost that most teams underestimate by 3-4x.

| Line item | Timeline | Cost |
|---|---|---|
| Hire a QA engineer | 3-6 months (job post, interviews, offer, notice period) | $15K-$25K in recruiting + zero output during search |
| Onboard and ramp | 2-3 months (learn codebase, product, existing tests) | $25K-$35K salary during ramp. Senior engineer at 25% capacity mentoring. |
| Evaluate and buy a tool | 2-4 weeks (POC, procurement, security review) | Engineering hours on evaluations nobody remembers |
| Write first 200 tests | 4-6 weeks | QA engineer time + developer pairing for complex flows |
| Integrate with CI/CD | 1-2 weeks | DevOps time, pipeline debugging |
| Total before first value | 6-9 months | $75K-$120K |

That's the optimistic scenario. You hired the right person on the first try. The tool POC worked. CI integration didn't break anything. The QA engineer didn't quit after three months because they spent all their time debugging flaky selectors instead of doing actual QA work.

During those 6-9 months, your team ships code unprotected. Regressions reach production. Customer trust erodes. Your engineers manually test before merging because they don't trust the (nonexistent) suite. The hidden cost of that period compounds in ways that never show up on a P&L.

Bug0 starts differently. Week 0, your forward-deployed QA engineer joins your Slack. Week 1, critical flows are covered. Week 4, full app coverage. $2,500/month flat. No hiring. No tool procurement. No six-month ramp. The FDE arrives pre-trained on Playwright and Bug0's AI platform.

Six months of zero coverage vs. week-one coverage. That's not a product comparison. It's a finance decision.

The cost-per-bug-caught calculator

Run this on your own team. It takes 10 minutes.

Step 1: Calculate annual regression suite cost

Annual regression suite cost = (QA engineer hours/week on test maintenance × hourly rate × 52) + (developer hours/week debugging flaky tests × hourly rate × 52) + (monthly CI compute cost for regression runs × 12) + annual tool licenses

Step 2: Count real regressions caught

Go to your bug tracker. Filter for bugs caught by automated regression tests in the last 12 months. Not "test failures." Real bugs that would have reached production without the test. Be honest. Most teams overcount by 2-3x because they include flaky test investigations that turned out to be nothing.

Step 3: Divide

Cost per bug caught = Annual cost ÷ Real regressions caught

Example: 10-engineer team, 2,500 regression tests

- QA maintenance: 15 hrs/week × $60/hr × 52 = $46,800
- Developer flake debugging: 8 hrs/week × $75/hr × 52 = $31,200
- CI compute: $500/month × 12 = $6,000
- Tool license: $5,000/year
- Total: $89,000/year

Bugs caught by regression suite last 12 months: 35-50 real regressions.

Cost per bug caught: $1,780-$2,543
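If you'd rather script it than use a spreadsheet, the three steps fit in a few lines. This is a minimal sketch that reproduces the example above. The class and field names are my own, and the inputs are the example's figures, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class SuiteCosts:
    qa_hours_per_week: float
    qa_hourly_rate: float
    dev_hours_per_week: float
    dev_hourly_rate: float
    ci_cost_per_month: float
    tool_license_per_year: float

    def annual_cost(self) -> float:
        """Step 1: sum labor, compute, and license costs over a year."""
        qa = self.qa_hours_per_week * self.qa_hourly_rate * 52
        dev = self.dev_hours_per_week * self.dev_hourly_rate * 52
        ci = self.ci_cost_per_month * 12
        return qa + dev + ci + self.tool_license_per_year


def cost_per_bug(annual_cost: float, real_regressions: int) -> float:
    """Step 3: divide annual cost by real regressions caught (Step 2)."""
    if real_regressions <= 0:
        raise ValueError("need at least one real regression to divide by")
    return annual_cost / real_regressions


# Figures from the 10-engineer example above.
example = SuiteCosts(15, 60, 8, 75, 500, 5_000)
total = example.annual_cost()
print(f"Annual cost: ${total:,.0f}")
print(f"Cost per bug: ${cost_per_bug(total, 50):,.0f}-${cost_per_bug(total, 35):,.0f}")
```

The hard input is still Step 2. The script can't tell a real regression from a flaky investigation; only honest triage can.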

Some of those bugs were critical. Some were a button that moved 10 pixels. You paid the same for both.

Compare to managed QA

Bug0: $30K/year. Same regressions caught, plus the ones your flaky suite skips because someone muted the alert six months ago. Plus human judgment on every failure. Plus coverage that grows with your product instead of ahead of it.

Cost per bug caught drops 60-70%. And you didn't spend six months hiring, onboarding, and evaluating tools before catching your first one.
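The 60-70% figure follows directly from the annual totals, as long as you accept the premise that the same number of regressions gets caught. That premise is this article's assumption, not a measured result. A quick check, with a hypothetical helper name:

```python
def cost_reduction(current_annual: float, alternative_annual: float) -> float:
    """Fractional drop in cost per bug when the regressions caught stay the same."""
    return 1 - alternative_annual / current_annual


managed = 2_500 * 12  # $2,500/month flat
for in_house in (84_000, 89_000):
    pct = cost_reduction(in_house, managed)
    print(f"${in_house:,} -> ${managed:,}: {pct:.0%} lower")
```

If the regression count differs between the two setups, compare cost per bug directly instead of annual totals.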

The ownership gap

Ask your team: who owns the regression suite?

Not "who runs the tests." Those run automatically. Who decides which tests should exist? Who prunes dead tests for deprecated features? Who adds coverage when you ship something new? Who decides whether a failure is a real bug or a flaky test? Who removes the test that's been skipped for four months because nobody wanted to fix it?

If the answer is "the team" or "everyone," nobody owns it. Shared ownership of a regression suite means shared neglect.

The pattern is predictable. The engineer who wrote the original suite leaves or moves to a different project. The suite keeps running. Tests accumulate. Nobody deletes anything because "what if we need it." Flaky tests get @skip annotations instead of fixes. The suite grows from 500 tests to 3,000 tests over 18 months. Maintenance cost triples. Bugs caught stays flat.

The companies that get regression testing right have one thing in common. A person whose job is regression outcomes. Not test count. Not coverage percentage. Outcomes. "Did we catch the regressions that matter before they reached production?"

That person is either a senior QA engineer you hire (6-month ramp, $130K-$150K/year fully loaded) or a forward-deployed QA engineer who shows up in your Slack on day one and owns it from week one.

What replaces the regression treadmill

The answer isn't more tests. It isn't better tools. It's a different model.

Outcome-based testing over script-based testing. "User can complete checkout" adapts when your checkout flow changes. page.click('#submit-btn') breaks. The first tests intent. The second tests implementation. Intent survives redesigns. Implementation doesn't.
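One way to approximate the difference in plain Playwright is to target what the user sees (roles and visible text) instead of CSS ids. To be clear, this is a swapped-in illustration using Playwright's Python locators, not a description of how any AI-driven platform works. The button label and confirmation text are hypothetical, and `page` is assumed to be a Playwright sync `Page`.

```python
from typing import Any


def checkout_by_implementation(page: Any) -> None:
    """Brittle: tied to a CSS id that a redesign can rename."""
    page.click("#submit-btn")


def checkout_by_intent(page: Any) -> None:
    """Closer to intent: find the control by its role and visible label."""
    page.get_by_role("button", name="Place order").click()
    # Wait until the (hypothetical) confirmation text becomes visible.
    page.get_by_text("Order confirmed").wait_for()
```

The second version still breaks if the label changes, so it narrows the gap rather than closing it. It does survive a markup refactor that leaves the visible flow intact.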

Fewer tests, higher signal. 200 outcome-based tests covering critical flows catch more real regressions than 3,000 brittle scripts covering every edge case. The ROI curve above shows why. The first 200 tests do 80% of the work. Everything after that is diminishing returns at full maintenance cost.

Someone who owns regression, not someone who set up a tool. Tools don't decide which tests matter. Tools don't prune dead tests. Tools don't look at a failure and tell you whether to block the release or ignore the noise. Tools don't attend your sprint planning and ask "what regression coverage do we need for this feature?" A person does.

Bug0 is this model. Your forward-deployed QA engineer owns regression coverage end-to-end. They plan which tests matter. Generate them with AI. Prune the ones that don't add signal. Triage every failure with human judgment. Gate your releases. $2,500/month flat, everything included. Tests self-heal when your UI changes. Coverage grows with your product. You don't maintain a regression suite. You get regression confidence.

My co-founder wrote about why this model exists in "why I built a boring AI company". The short version: the future of QA isn't a shinier tool. It's someone who owns the outcome.

FAQs

What is regression testing?

Regression testing is re-running existing tests after code changes to verify that previously working functionality still works. The goal is to catch bugs introduced by new code before they reach production. It's one of the most important practices in software engineering. In 2026, the challenge isn't the concept. It's the economics: maintenance cost grows linearly with test count while the bugs caught plateau. For more on how AI is changing this equation, see our guide on AI automation testing.

What is regression testing in software?

In software development, regression testing means systematically re-executing tests against modified code to detect unintended side effects. It covers everything from unit tests (individual functions) to end-to-end tests (full user flows in a browser). The practice is especially critical for web applications where UI changes can break flows across multiple pages and user journeys.

What is regression testing in agile?

In agile environments, regression testing runs on every sprint or PR merge to catch bugs early. Agile teams typically automate regression tests and integrate them into CI/CD pipelines so they run on every code change. The challenge in agile is speed: full regression suites take 45-90 minutes, but agile teams ship multiple PRs per day. Selective regression testing (running only affected tests) helps, but requires accurate dependency mapping.

What is automated regression testing?

Automated regression testing uses scripts (typically Playwright, Selenium, or Cypress) to re-run tests without manual intervention. Automation solves the speed problem but not the strategy problem. You can run 5,000 tests in 20 minutes and still miss the bug that costs you a customer, because the test for that flow was skipped three months ago and nobody noticed. Automation handles execution. Coverage strategy, failure triage, and test pruning still require human judgment.

What is the difference between regression testing and retesting?

Retesting verifies that a specific known bug has been fixed. You found a bug, a developer fixed it, you re-run that specific test to confirm the fix works. Regression testing checks whether the fix (or any other change) broke something else. Retesting asks "is this bug fixed?" Regression testing asks "did fixing this bug create new ones?"

How much does regression testing cost?

Most teams budget $0 for regression testing because they treat it as "free" once the tests are written. The real cost includes QA engineer time maintaining tests (15-20 hrs/week), developer time debugging flaky failures (5-10 hrs/week), CI compute costs, and tool licenses. For a typical 10-engineer team with 2,500 tests, the annual cost is roughly $84K-$89K. The cost per bug caught typically lands at $1,700-$2,500. Bug0 replaces this for $30K/year flat with coverage from week one.

How many regression tests should you have?

Fewer than you think. Your first 200 tests covering critical user flows catch roughly 80% of regressions. Beyond 500-800 tests, the maintenance cost typically exceeds the value of additional bugs caught. The right number depends on your product's complexity, but the goal should be maximum signal per test, not maximum test count. If you're proud of having 3,000 tests, calculate the cost per bug caught first.

What's the alternative to maintaining a regression test suite?

Managed QA services where a dedicated engineer owns your regression coverage end-to-end. They decide which tests matter, generate them using AI, prune the ones that add noise, triage every failure, and gate your releases. You get regression confidence without owning the suite. Bug0 delivers 100% critical flow coverage in week one for $2,500/month. No hiring, no tool procurement, no six-month ramp.